The convergence of Additive Manufacturing and Artificial Intelligence: Envisioning a future that is closer than you think

The frenzy of media attention surrounding Artificial Intelligence (AI) dwarfs the past hype surrounding Additive Manufacturing (AM). Whether you look to the future with fear or excitement, there is no escaping the wave of change that is coming. Whilst we once again hear words like 'revolution' being used – to which so many have become immune – Dr Omar Fergani believes that we are now at a crucial point of convergence for AM and AI. Here, he explains why AM is in an especially strong position to leverage the potential of AI, with the power to transform many areas of our industry, from part design to machine operation, quality management and beyond. [First published in Metal AM Vol. 9 No. 3, Autumn 2023]

Much like the advent of electricity or the internet, the blending of Artificial Intelligence and big data is not just an incremental improvement. Instead, it represents a foundational shift that is reshaping the bedrock of our industries and society. As we harness this unprecedented convergence, we are not just building better tools – we are redefining the very essence of how we work, think, and live.

In this article, we will explore in depth how AI will impact the Additive Manufacturing industry, from a new wave of advanced design possibilities to autonomously controlling AM machines and ensuring part quality and repeatability. We will share insight gathered from those at the cutting edge of the convergence of AI and AM, whose voices are not often heard in the media but who promise to transform our industry through their research. We will also offer advanced use cases, insight into existing products and services and, by the end, hope to have presented a clear picture for AM experts, corporate decision makers, and engineers.

I believe that right now, rather than in the distant future, there is an opportunity for the AM industry to leverage the power of machine learning and deep learning models to bring a new level of efficiency to AM processes, operation and businesses, co-piloting Additive Manufacturing on the path to industrialisation.

True AI: a profound transformation

Over the past few years, we’ve witnessed the initial sparks of this transformation as AI models taught themselves to recognise faces, interpret languages, and even compose music, all by sifting through mountains of data. But we are just scraping the surface of what is possible. With each passing day, AI is becoming increasingly adept at learning from all types of data, delivering insights that until now have been impossible to generate.

I believe that a new industrial era – powered by AI and quality big data, along with impressive computational capabilities powered by cloud and quantum computing – will make the transition from steam to electricity seem minor in comparison. From healthcare to manufacturing, transportation to agriculture, no sector of the economy will remain untouched. Jobs that once seemed firmly in the realm of human creativity and intuition are now on the verge of being automated.

As a millennial, I’m amazed to live in this time of rapid technological change. Alan Turing’s early ideas about smart machines once read like science fiction, but now they’re all around us and becoming ever more capable.

We now find ourselves at another pivotal juncture, with AI’s potential magnified by its integration with that other groundbreaking technology with which we are all much more familiar: Additive Manufacturing. With AM, we find a technology ripe for the transformative impact of AI. Despite being an industry in relative infancy, AM has already demonstrated its potential, offering unprecedented flexibility in design and production, optimising the use of resources and reducing waste. However, we must not be so enamoured by its potential that we overlook the significant challenges standing in its path. The first set of challenges concerns efficiency, yield, and throughput. Traditional manufacturing methods, honed over decades, can deliver products at a scale and speed that Additive Manufacturing processes currently struggle to match. For industries that rely on volume, this discrepancy can’t be ignored. The slower pace of AM risks hindering its wider adoption in sectors where time is of the essence.

Yet it’s not just about speed or volume. The promise of additive lies in its precision, material efficiency, part consolidation and part customisation. But, as with all new technologies, this promise comes with questions about the quality, repeatability, and, ultimately, the economic competitiveness of the process. While one additively manufactured component might meet all quality benchmarks, ensuring that the next thousand or ten thousand pieces meet those same standards is critical. Variability in factors such as material quality, machine calibration, and environmental conditions risks introducing inconsistencies in the final product. For industries such as aerospace or healthcare, where a minor defect can have grave consequences, this unpredictability is a concern.

Lastly, as with any new technology, the spectre of standardisation looms large. The AM industry is still relatively young, with a plethora of machines, materials, and processes vying for dominance. Without universal standards, there’s a risk of creating siloed ecosystems, where interoperability becomes a challenge. Manufacturers, regulators, and consumers alike need clear guidelines on what constitutes quality, safety, and reliability in the realm of Additive Manufacturing.

As we address these challenges, the burgeoning field of AI offers the potential for accelerated progress. AI, with its ability to analyse vast datasets, predict anomalies, and optimise processes, could be a key to help unlock the full potential of AM. By marrying the capabilities of Additive Manufacturing with the predictive power of AI, I believe that we stand on the cusp of a new era in manufacturing.

Design for Additive Manufacturing and beyond

It is widely understood within the AM industry that simply transplanting designs from traditional processes such as injection moulding to Additive Manufacturing misses the mark. Without tapping into AM’s design freedom, we’re not realising its full potential. This results in a situation where AM isn’t scaling as fast as it might because manufacturers aren’t seeing enough components that are designed for AM (DfAM). There is something happening here that is bigger than DfAM.

AI, and, specifically, the power of deep learning surrogate models, should not be underestimated when it comes to AM part development. The shift is more than just technological – it’s a reimagining of how we design, evaluate, and innovate. Traditionally, the product creation journey was iterative. Designers, using CAD, would create designs that simulation experts would appraise, critique, and refine using CAE’s analytical lens. Evaluations and adjustments would be made, often reconciling disparate file formats, and navigating the complexities of tools that were powerful yet computationally expensive.

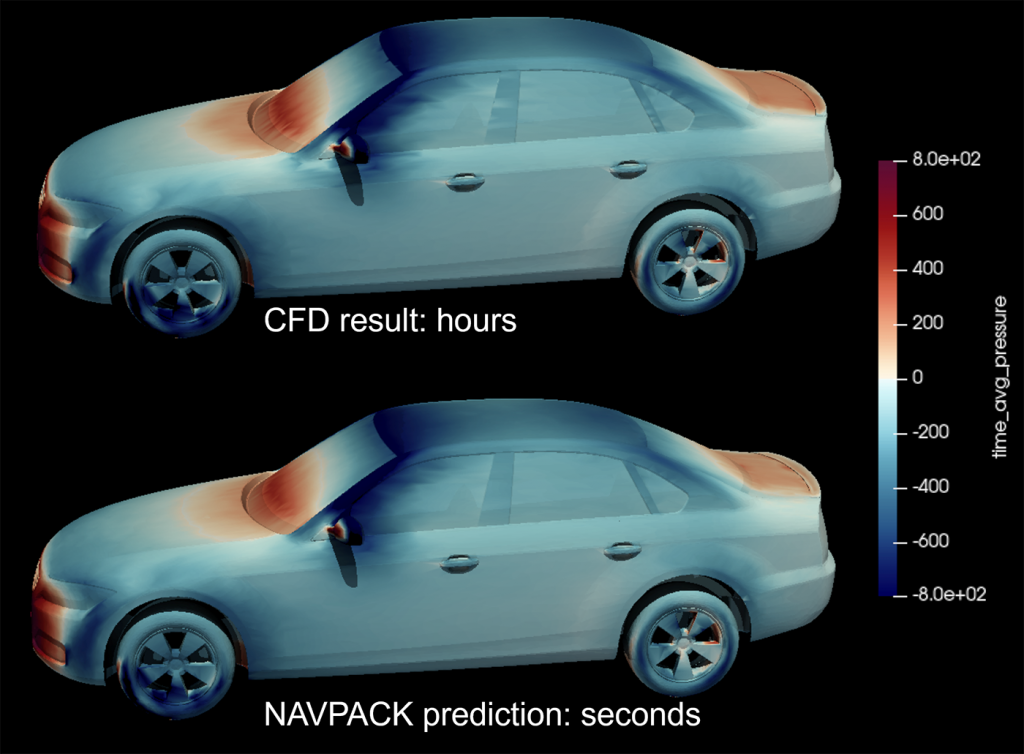

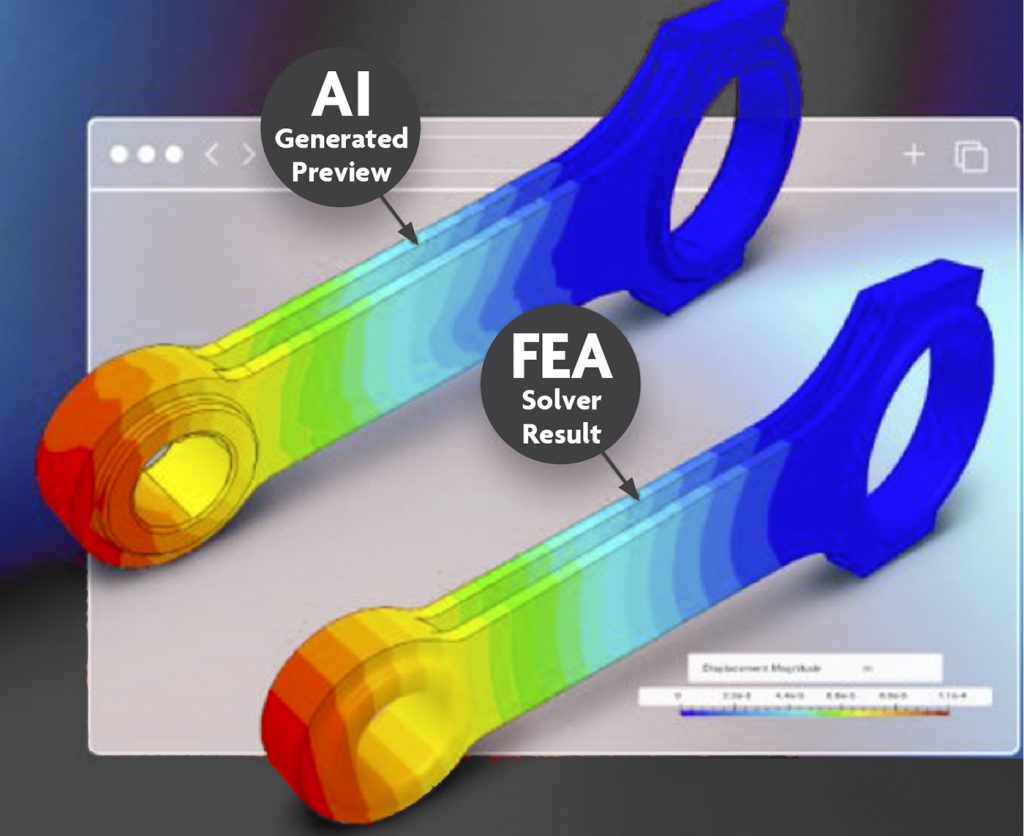

AI-driven surrogate models trained on quality physics-based data leveraging traditional CAE models can cut the simulation time from hours to seconds (Fig. 2). Using the generative nature of deep learning, they can adapt and iterate until identifying the optimal shape based on the product engineering requirements, generally based on the criteria of stress, fatigue, thermal behaviour, weight reduction, or any combination of these.
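To make the surrogate idea concrete, here is a minimal sketch in Python. It is an illustration under loud assumptions, not how any vendor named in this article works: the "expensive" simulation is a made-up closed-form stress formula, the surrogate is simple piecewise-linear interpolation standing in for a deep learning model, and every name and number (`expensive_simulation`, the 100 MPa limit, the thickness range) is hypothetical. The pattern is the point: run the slow solver a handful of times, fit a cheap model, then search the design space against the cheap model.

```python
from bisect import bisect_right


def expensive_simulation(thickness_mm: float) -> float:
    """Stand-in for a slow CAE run: peak stress (MPa) for a wall thickness.

    The formula is purely illustrative (an assumption, not real physics data).
    """
    return 600.0 / thickness_mm ** 2


def train_surrogate(samples):
    """Build a piecewise-linear surrogate from (input, output) pairs.

    A real surrogate would typically be a trained neural network; linear
    interpolation is swapped in here to keep the sketch dependency-free.
    """
    points = sorted(samples)
    xs = [x for x, _ in points]
    ys = [y for _, y in points]

    def predict(x: float) -> float:
        # Clamp to the training range, then interpolate between neighbours.
        if x <= xs[0]:
            return ys[0]
        if x >= xs[-1]:
            return ys[-1]
        i = bisect_right(xs, x) - 1
        frac = (x - xs[i]) / (xs[i + 1] - xs[i])
        return ys[i] + frac * (ys[i + 1] - ys[i])

    return predict


# 1. Pay for a small number of "expensive" runs (9 here, from 1.0 to 5.0 mm).
training_inputs = [1.0 + 0.5 * k for k in range(9)]
training_data = [(t, expensive_simulation(t)) for t in training_inputs]
surrogate = train_surrogate(training_data)

# 2. Explore many candidate designs using only the cheap surrogate.
STRESS_LIMIT_MPA = 100.0  # hypothetical engineering requirement
candidates = [1.0 + 0.01 * i for i in range(401)]
feasible = [t for t in candidates if surrogate(t) <= STRESS_LIMIT_MPA]

# 3. Pick the lightest (thinnest) design predicted to satisfy the limit.
best_thickness = min(feasible)

# 4. Confirm the chosen design with one final "expensive" run.
confirmed_stress = expensive_simulation(best_thickness)
```

In this toy setup, 401 candidates are screened at the cost of nine training runs plus one confirmation run. The final confirmation step matters: a surrogate is only as trustworthy as the data it was trained on, so the shortlisted design is still checked against the full-fidelity model.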

I spoke with Dr Matthias Bauer, CEO and co-founder of Navasto, one of the leading providers of accelerated AI. He commented, “In the realm of engineering, AI has significantly accelerated processes. I foresee that, within a year, every design and simulation solution will necessitate this technology; with a failure to incorporate AI comes the risk of obsolescence.”

Additive Manufacturing companies stand to gain substantially. By harnessing AI-accelerated engineering, they can fully explore the design freedoms inherent to AM. The potential for designing high-performance systems is clear: AI-accelerated simulations will enable engineers to more easily produce superior heat exchangers, lightweight structures, and advanced fluidic applications.

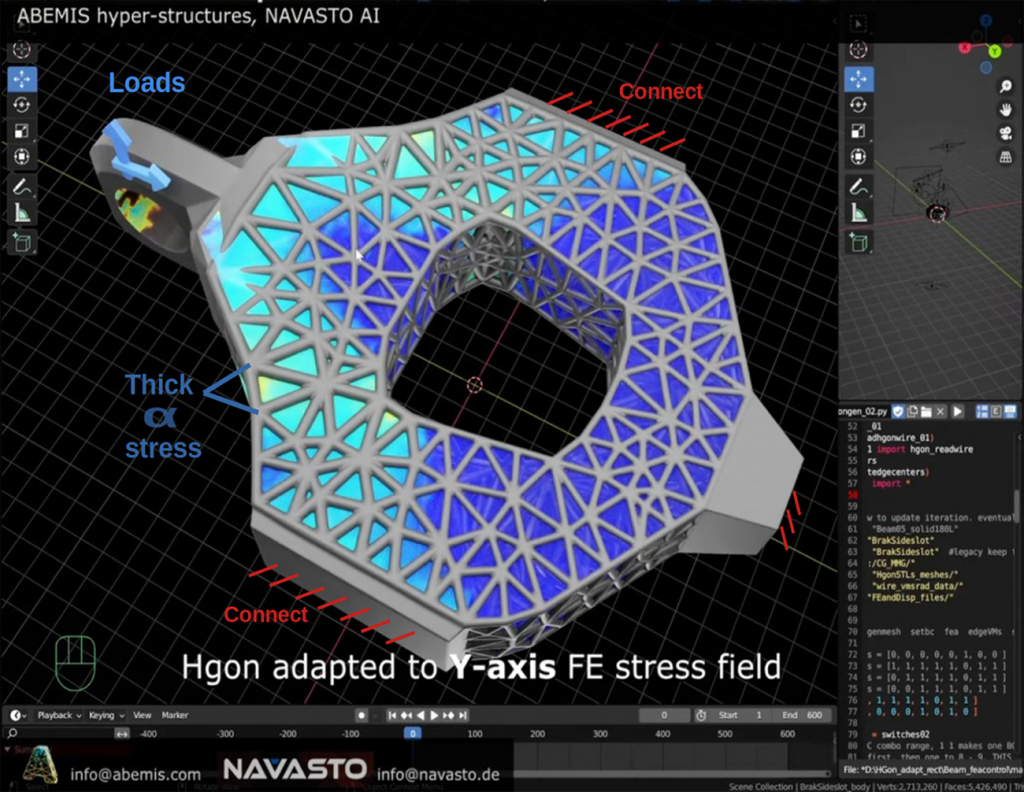

When it comes to lattice designs, Dr Todd Doehring, CEO of ABEMIS, recently shed light on a pertinent challenge that engineers often encounter. “Determining the correct meta-geometries for a specific application is often tricky because there can be a large number of parameters, conditions, and constraints.” This complexity has long been a bottleneck, particularly when working with advanced materials and complex geometries that defy easy categorisation or modelling.

However, Dr Doehring’s team found a groundbreaking solution by leveraging the AI capabilities of its partner, Navasto. The team successfully designed a high-performance, ultra-light, vibration-damping camera mount – a feat that was previously daunting due to computational limits (Fig. 3). “While we once relied on our empirical understanding of meta-lattice behaviour, or on CAE simulations that required many days of computation on powerful machines, we can now achieve results in seconds using AI simulations,” he elaborated.

This real-world application serves as a compelling case study for what AI-accelerated engineering can achieve, even in the most challenging of scenarios. Dr Doehring concluded, “This kind of speed-up is revolutionary and I’m convinced it will pave the way for widespread use of metamaterials and optimised hyperstructure components that are very computationally intensive to generate using standard FEA-based procedures.”

Contrary to the perception that AI-driven product development is a far-off future, the reality is that the transition is happening more rapidly than many anticipate. Leading aerospace and automotive design departments are in a competitive race to master AI technologies, particularly deep learning surrogate models. Their goals are clear: to significantly reduce their ‘time-to-market’ Key Performance Indicators (KPIs).

Take SimScale, for instance. This leader in cloud-simulation technology has already partnered with Navasto to offer AI-accelerated engineering solutions. Engineers can leverage these tools today to speed up their design and simulation processes, thus gaining a competitive edge (Fig. 4).

For the AM industry, the advent of AI-integrated design tools is especially impactful. Traditional computer-aided design (CAD) software has evolved to enable the design of complex lattice structures. However, the challenge has always been to understand the physics-based behaviour of these intricate geometries. Conventional approaches often rely heavily on experimental data, which is both time-consuming and resource-intensive to collect. AI-driven surrogate models can dramatically cut down this time, allowing for the rapid exploration of design spaces that were previously considered too complex or time-consuming to investigate. Freed from the limitations of complex physics-based modelling and the need for cumbersome experimental data, the industry can use AI-accelerated simulations to quickly validate intricate designs, thus unleashing the true potential of the design freedom that AM offers.

The accelerated pace at which these technologies are being adopted is creating a domino effect across sectors. As more companies adopt AI-based tools, those who delay risk falling into obsolescence, as noted by Dr Matthias Bauer. The technology is no longer just an enhancement; it is becoming a requirement for staying competitive.

The integration of AI into product development, and particularly into AM, is not a speculative future – it is a present day reality. Companies and industries that recognise this and act swiftly stand to gain significant advantages, from reduced time-to-market to the unlocking of previously unimaginable design possibilities.

Software-defined autonomous machines

Of all metal Additive Manufacturing processes, Laser Beam Powder Bed Fusion (PBF-LB) stands out as arguably the most commercially successful and widely available technology. However, the current hardware seems to have reached its operational limits. Modern PBF-LB machines are growing in size, with some accommodating up to twenty lasers in a 1 x 1 x 1 m volume. The resulting increase in machine complexity, while enhancing capabilities, also introduces the potential for operational issues. “The sophisticated interactions between components in AM machines challenge the consistency of performance and manufacturing stability.”

Moreover, the generation of toolpaths, pivotal for machine function, may not always result in the desired accuracy. Much of the software currently in use can overlook the intricate relationship between energy, material, and the design’s geometry. The result can be uneven thermal stresses during production, leading to distortion, varied microstructures, and potentially a reduction in the final product’s mechanical strength. These inefficiencies lead to higher scrap rates and more frequent non-conformance in final parts.

Adding to these challenges, the combined complexities of both hardware and software – along with limited proactive control – risk further burdening engineers during part qualification and certification. Predicting the performance of parts – particularly large, critical and complex parts – is notably difficult given the nuanced interactions of machine components and software protocols. Any unpredictability risks lengthening qualification phases and complicates certification processes, resulting in an extended and more complex pathway to final part approval.

Given these challenges, the potential role of AI in this space becomes paramount. Could AI provide solutions to address these hardware and software intricacies, streamline the qualification processes, and bring about more predictable and efficient manufacturing outcomes?

One of the advances in this field is coming from 1000Kelvin, a company that I co-founded, which is building a next-generation AI-enabled software control platform for AM. Based in Berlin and Los Angeles, California, the firm is working closely with leading machine builders such as EOS and Nikon SLM Solutions to deploy its AI technology, AMAIZE, at scale.

Dr Katharina Eissing, CTO and co-founder of 1000Kelvin, is a theoretical quantum physicist who leads a team of seven mathematicians and physicists working on the development of AMAIZE. She has been convinced since the first day she learned about AM that it is the ideal use case for a deep learning-based control model. “Physics-based models and numerical simulations have been instrumental in the success of our civilisation. Integrating these models with a deep learning approach opens an entirely new domain of opportunities in terms of precision and speed,” she stated.

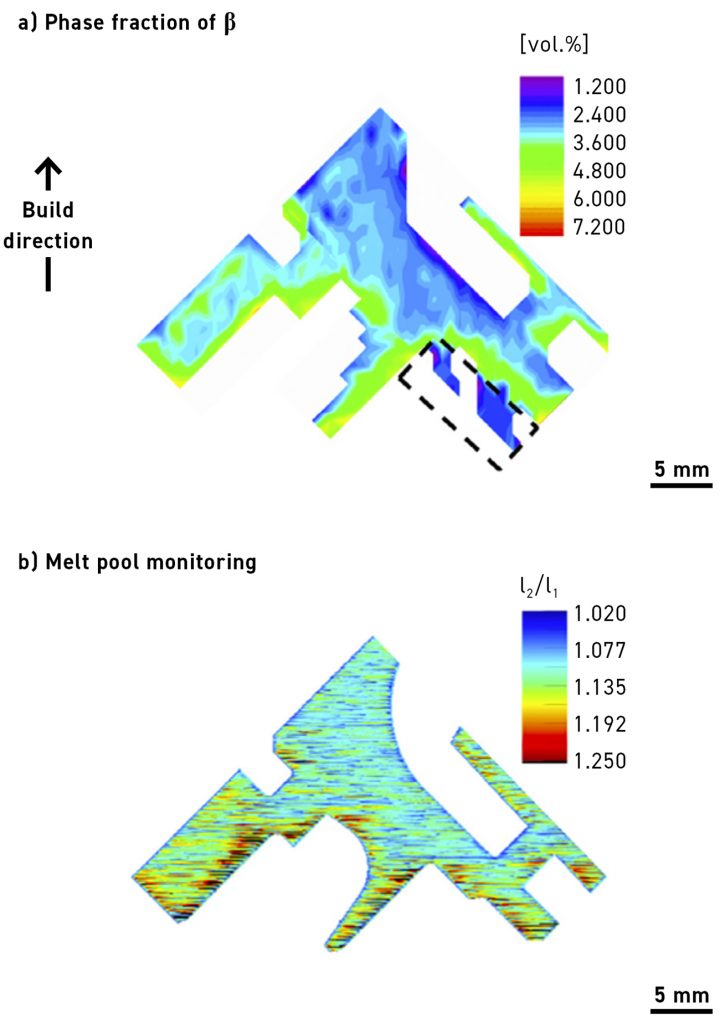

“Consider a one-metre high part. It would contain tens of thousands of slices and millions of vectors within its toolpath. Using academic-type simulations to understand the full part would, without exaggeration, take millennia. At 1000Kelvin we have for several years been developing a deep learning model to expedite these predictions, optimising thermal management and enabling our customers to produce higher-quality parts using the PBF-LB process with minimal iterations” (Fig. 5).

“This is extremely complex technology that requires superior understanding of the physics of the process, a massive amount of computing power, and a physical platform to test and validate these large-domain, expert AI models. From the start, we have been fortunate to work with leading space and industrial companies to validate our technology on their extremely complex parts and gain traction from day one,” stated Eissing.
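The scale described for a one-metre part can be checked with simple arithmetic. The sketch below is only a back-of-envelope estimate: the layer thickness and the number of scan vectors per slice are assumed values chosen for illustration, not figures from 1000Kelvin, and real values vary widely with material, machine, and part geometry.

```python
def slice_count(part_height_m: float, layer_thickness_um: float) -> int:
    """Number of layers needed to build a part of the given height."""
    return round(part_height_m * 1_000_000 / layer_thickness_um)


PART_HEIGHT_M = 1.0
LAYER_THICKNESS_UM = 40.0   # assumption: a plausible PBF-LB layer thickness
VECTORS_PER_SLICE = 400     # assumption: depends heavily on cross-section

slices = slice_count(PART_HEIGHT_M, LAYER_THICKNESS_UM)
total_vectors = slices * VECTORS_PER_SLICE

print(f"{slices:,} slices, {total_vectors:,} scan vectors")
```

Under these assumptions the part has 25,000 slices and ten million scan vectors, consistent with the "tens of thousands of slices and millions of vectors" quoted above. Changing the assumed layer thickness to 30 or 60 µm still leaves the slice count in the tens of thousands.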

The company has established a long-term strategic R&D partnership with the Brandenburg Technical University (BTU), Cottbus, and the Chair of Hybrid Manufacturing, Prof Sebastian Härtel, giving it access to a world-class research facility that includes seven industrial grade PBF-LB machines equipped with all types of monitoring and materials characterisation technologies (Fig. 6).

“In collaboration with 1000Kelvin’s research and development team, we are actively exploiting the machine learning algorithms instantiated in AMAIZE. The recursive learning paradigms demonstrated by this Artificial Intelligence are nothing short of transformative. Currently, we are spearheading an initiative that employs this computational framework to deterministically forecast material properties. The implications of this technology are not merely incremental; they represent a paradigmatic shift in the field,” stated Härtel.

Access to these advanced models will unlock potential that goes beyond simply achieving accurate builds at the first attempt. The predictive software capabilities of the technology are already allowing customers from aerospace, industrial manufacturing, and service bureaux to reduce the amount of scrap, distortion and necessary support structures substantially. The software’s ease-of-use enables users to benefit from AI immediately – not at some distant point in the future. The predictive nature of the technology has other benefits, such as the ability to fix a user’s process upfront with a high level of traceability.

Furthermore, AI technology is enabling the ‘holy grail’ of digital manufacturing – that is, the concept of digital materials built on advanced understanding and control of processes; so, not just to build a 3D geometry, but to induce the desired properties in a localised manner (Fig. 7). On this front, the team at 1000Kelvin is already developing a proof of concept for the control of phases during the manufacturing of Ti-6Al-4V, a well-known problem in the aerospace industry.

Combining the ability to reduce engineering time and iterations through increased software automation, achieve accurate builds on the first attempt, minimise the need for support structures, and customise material properties will unlock the vast market potential for PBF AM. What might have seemed impossible just a year ago is immediately within reach. An AI-powered future for Additive Manufacturing will see increasing autonomy and, thus, better yield and quality. As AI models mature and improve in performance, they will become central to the systems of the industry, making future developments all the more interesting and providing powerful tools to help accelerate the scaling of AM.

Quality Assurance: AI watch

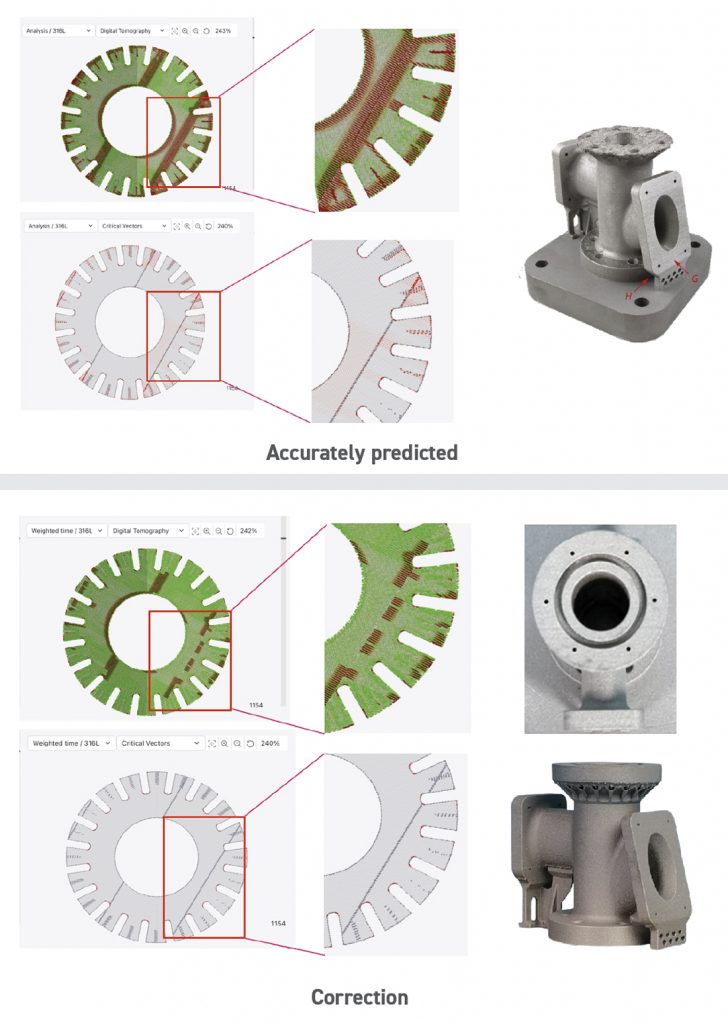

The maturation of Additive Manufacturing, particularly in metal-based PBF-LB, hinges not just on the sophistication of process technologies but also on robust quality control mechanisms. Although effective, traditional post-mortem techniques, such as X-ray tomography and ultrasound, introduce latency and resource inefficiency into the production cycle. AI-powered in-situ monitoring, leveraging advanced machine learning and deep learning algorithms, offers a transformative solution.

These AI systems provide real-time insights into the integrity and quality of the part being manufactured, allowing for immediate corrective actions and resource optimisation. By integrating sensor data and machine learning analytics directly into the manufacturing process, AI sets the stage not only for higher reliability but also for the operational agility required in next-generation manufacturing ecosystems. This essentially evolves AM from a ‘build-then-check’ to a ‘build-and-check’ paradigm, marking a significant leap in both performance and cost-efficiency.

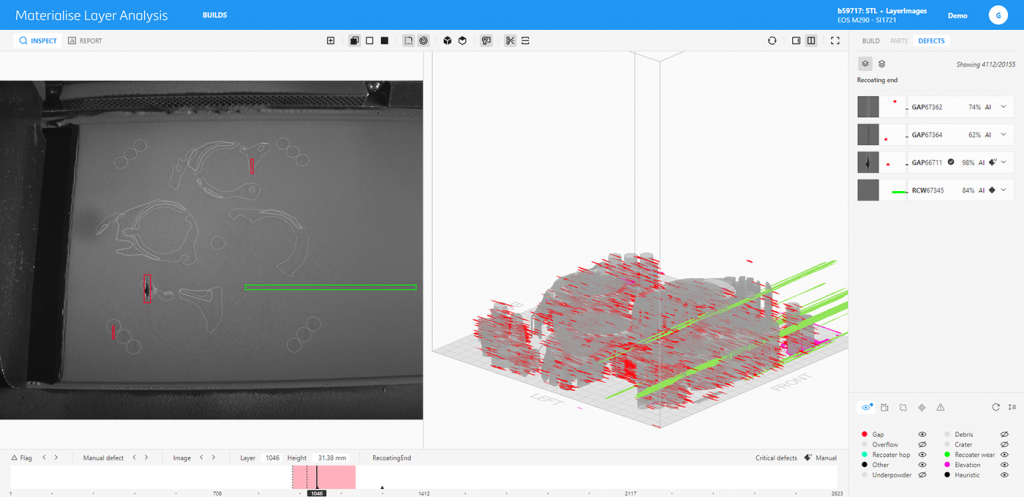

Moreover, commercially available image-based analysis tools from companies such as Materialise (Fig. 8), Zeiss (in collaboration with EOS), Addiguru, and Additive Assurance are accelerating this transformative shift. Utilising diverse machine learning methodologies, these tools offer turnkey solutions for real-time defect detection during the build process. They serve as critical decision-making instruments, enabling manufacturers to either halt a failing build – thereby mitigating the cost of failure – or enhance quality control measures dynamically. This further solidifies the role of AI in transitioning from build-then-check to build-and-check, adding another layer of performance and cost-efficiency.

The efficacy of this AI-driven in-situ monitoring is underpinned by process signatures – data amalgamated from machine control systems and an array of sensors. These process signatures serve as real-time health indicators of the PBF-LB process, enabling nuanced control over structural integrity and surface roughness. Ideally, a fully-realised in-situ monitoring system will promptly detect and rectify anomalies, thereby auto-calibrating PBF-LB process parameters or even the machine itself.
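The ‘build-and-check’ idea can be illustrated with a deliberately simple sketch. It assumes one scalar process signature per layer (for example, a mean melt pool intensity) and uses plain z-score thresholding against a baseline of early layers. This is a stand-in for the machine learning methods used by commercial tools, not a description of any of them, and all names, thresholds, and data below are hypothetical.

```python
from statistics import mean, stdev


def flag_anomalous_layers(signatures, baseline_layers=5, z_threshold=3.0):
    """Return indices of layers whose signature deviates from the baseline.

    The first `baseline_layers` values are assumed to come from a healthy
    process and define the reference mean and standard deviation.
    """
    baseline = signatures[:baseline_layers]
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variation; cannot compute z-scores")

    flagged = []
    for layer, value in enumerate(signatures):
        if layer < baseline_layers:
            continue  # baseline layers are not scored against themselves
        z_score = abs(value - mu) / sigma
        if z_score > z_threshold:
            flagged.append(layer)
    return flagged


def should_halt(flagged_layers, consecutive=3):
    """Recommend halting once `consecutive` adjacent layers are flagged."""
    if not flagged_layers:
        return False
    run = 1
    if run >= consecutive:
        return True
    for previous, current in zip(flagged_layers, flagged_layers[1:]):
        run = run + 1 if current == previous + 1 else 1
        if run >= consecutive:
            return True
    return False


# Hypothetical per-layer signatures: stable at first, then drifting upwards.
layer_signatures = [100.2, 99.8, 100.1, 99.9, 100.0,
                    100.3, 99.7, 104.0, 105.5, 106.1, 100.1]

flagged = flag_anomalous_layers(layer_signatures)
halt = should_halt(flagged)
```

With this data, layers 7 to 9 are flagged and the build would be recommended for a halt. Requiring several consecutive flagged layers is one simple way to avoid stopping a build on a single noisy reading; a production system would also need to account for sensor measurement uncertainty.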

However, challenges persist. Correlating these process signatures with user-defined quality attributes for accurate anomaly and defect characterisation remains an open research question. The selection of sensors and monitoring techniques, particularly their spatial and temporal resolutions, is an ongoing debate among experts.

Moreover, the issue of measurement error is often underestimated, calling for a more rigorous approach to uncertainty quantification. Further complexities include the standardisation of measurements for precision, and the interpretation of data to assess the holistic health state of a PBF-LB system. There is an evident need for more focused research to comprehend the intricacies of adopting either on- or off-axis process sensing and monitoring, considering variables like accuracy, frequency, and spatial-temporal resolutions. In a recent comprehensive review of the state of the literature, Dr Tuğrul Özel, a full professor at Rutgers University, New Jersey, USA, stated, “The long-term aims for in-process monitoring can include utilising machine learning and Artificial Intelligence to develop processes that are self-learning and intelligent, and also transferable from machine-to-machine.”

The role of large language models

Large language models (LLMs) such as GPT-4 are fundamentally redefining the way humans interact with technology, solidifying natural language processing as the new human-machine interface. The evolution of software development paradigms has reached a stage where the most intuitive programming language is increasingly becoming English itself. As the technology undergoes rapid advancements, these models are not just confined to language but are becoming multi-modal, capable of understanding and processing various types of data. Their fine-tuning capabilities allow them to adapt to specialised tasks, thereby making them invaluable across diverse sectors.

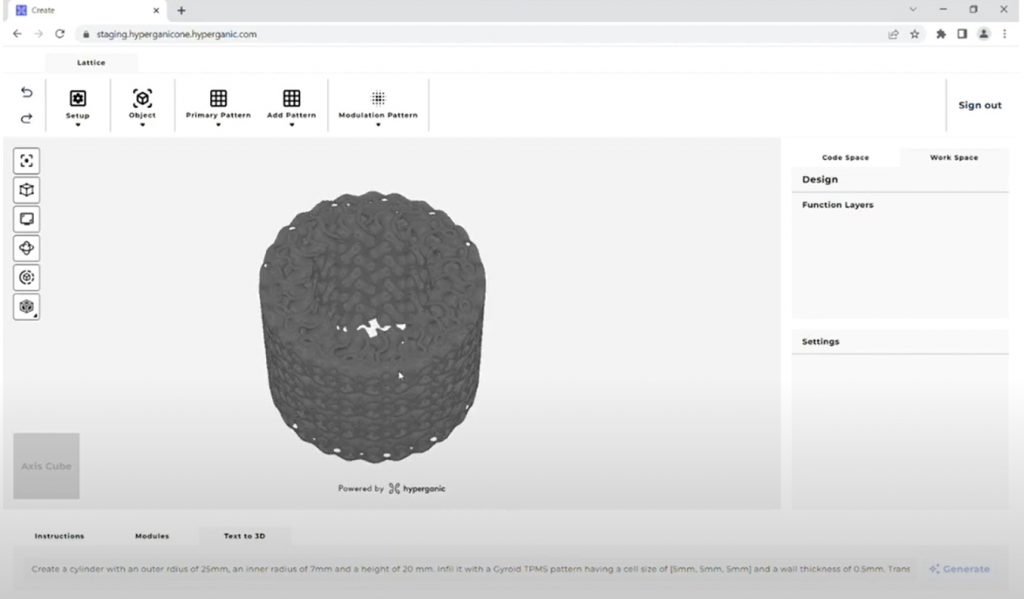

In the context of AM, companies are already leveraging these capabilities to solve industry-specific challenges. For instance, Hyperganic is actively researching how to leverage LLMs to make its algorithmic engineering design technology more accessible, removing the necessity for specialised software or coding skills, and thus broadening its user base. At the recent CDFAM symposium in New York, Hyperganic presented a proof of concept: a direct human language interface supporting the systematic design of complex lattice structures (Fig. 9).

While LLMs offer groundbreaking capabilities for human-machine interaction, there’s a rising concern about the misuse or overextension of the term ‘AI’ in product labelling – particularly when the integration is minimal. Adding a chatbot interface powered by GPT to a product does not necessarily make it a transformative AI solution, especially in specialised fields such as AM, design, and engineering.

Before delving further, it is crucial to understand what multi-modality means in the context of LLMs. Multi-modality refers to the model’s ability to understand, interpret, and generate multiple types of data – such as text, graphs, numerical data, and 3D designs – rather than just text. This capability is vital for complex tasks in specialised industries. The challenge in Additive Manufacturing is not merely to add a chat-based interface, but to fine-tune the model rigorously for the specific tasks at hand. This fine-tuning demands high-quality, specialised data, and often requires the model to handle several modalities at once in order to provide actionable insights or generate usable outputs. For instance, in AM, a simple chatbot function would be vastly insufficient for tasks like generative design optimisation, which could involve interpreting CAD files, material stress simulations, and natural language inputs, all in an integrated manner.

Given these complexities, the emergence of Text-to-CAX (Computer-Aided Design, Manufacturing, and Engineering) solutions is on the horizon. However, it comes with some caveats. Companies venturing into this space should be prepared for substantial time and resource investment to nail the true value proposition expected by customers. Therefore, while it’s tempting to jump on the AI bandwagon by merely integrating a chatbot into a product, stakeholders should be cautious. Such a simplified approach risks diluting the meaning of AI and could lead to customer disillusionment, particularly when the technology fails to deliver on substantial, industry-specific challenges.

A call to action: seize the AI-driven future of Additive Manufacturing now

The convergence of AI and AM represents more than an incremental upgrade – it is, in my view, a seismic shift, capable of reshaping industries. As we’ve explored in this article, AI is no longer confined to academic circles or niche applications. It is an enabling technology that’s infiltrating every aspect of AM, from design and simulation to quality assurance and beyond, and it is already being leveraged by leading companies within our industry.

We’re at a crucial inflection point. The challenges facing AM – efficiency, quality, and standardisation – are not trivial. Yet they are not insurmountable either, especially with the AI tools at our disposal. Companies such as Navasto and SimScale are already using AI to cut simulation times from hours to seconds, opening a huge world of opportunities for DfAM and the reduction of time-to-market. Leveraging deep learning to optimise thermal management and enable predictive first-time-right outcomes improves part quality and reduces cost per part, allowing AM to be more competitive. Multiple monitoring companies are leveraging their understanding of data and machine learning to enable in-process quality control. And the role of LLMs such as GPT-4 is just beginning to be understood; their potential to simplify complex tasks and democratise technology is vast.

Now is the time for action. Every stakeholder in the AM ecosystem, from engineers and designers to decision-makers and investors, is encouraged to recognise the transformative potential of integrating AI into their processes. The question is not whether AI will revolutionise AM – I can see that it is already happening. Those who embrace the synergistic potential of AI and AM have, I believe, the opportunity to place themselves at the forefront of an industry on the cusp of extraordinary change.

Author

Omar Fergani

CEO & co-founder at 1000 Kelvin GmbH

www.1000kelvin.com